These are notes for Professor Adam B Kashlak's Probability and Measure Theory course.

Measure Theory

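Measures and σ-Fields

σ-Field

Question: What sets (subsets of $\Omega$) am I allowed to measure?

Definition: For some set $\Omega$, a σ-field $\mathcal{F}$ is a collection of subsets of $\Omega$ s.t.

- $\emptyset, \Omega \in \mathcal{F}$

- If $A \in \mathcal{F}$ then $A^c \in \mathcal{F}$

- $\mathcal{F}$ is closed under countable unions: for a countable collection of sets $\{A_i\}_{i=1}^\infty$ s.t. $A_i \in \mathcal{F}$ for all $i$, then $\bigcup_{i=1}^\infty A_i \in \mathcal{F}$

Equivalence: $\mathcal{F}$ also contains countable intersections because $\bigcap_{i=1}^\infty A_i = \left(\bigcup_{i=1}^\infty A_i^c\right)^c$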

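Measure

Definition: A measure $\mu$ is a mapping from a σ-field $\mathcal{F}$ to the non-negative extended real numbers $[0, \infty]$ s.t.

- $\mu(\emptyset) = 0$

- For a pairwise disjoint collection $\{A_i\}_{i=1}^\infty \subseteq \mathcal{F}$, $\mu\left(\bigcup_{i=1}^\infty A_i\right) = \sum_{i=1}^\infty \mu(A_i)$ (countable additivity)

Special Cases for a Measure Space $(\Omega, \mathcal{F}, \mu)$:

Power Set

Definition: The power set $2^\Omega$ or $\mathcal{P}(\Omega)$ is the set of all subsets of $\Omega$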

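Semi-Ring of Sets

Definition: $\mathcal{S}$, a collection of subsets of $\Omega$, is called a semi-ring when

- $\emptyset \in \mathcal{S}$

- If $A, B \in \mathcal{S}$ then $A \cap B \in \mathcal{S}$

- If $A, B \in \mathcal{S}$ then there exists a finite number of pairwise disjoint sets $C_i \in \mathcal{S}$ for $i = 1, \dots, n$ s.t. $A \setminus B = \bigcup_{i=1}^n C_i$

Example: the collection of all half-open intervals $(a, b]$ in $\mathbb{R}$ is a semi-ring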

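Ring of Sets

Definition: $\mathcal{R}$, a collection of subsets of $\Omega$, is called a ring when

- $\emptyset \in \mathcal{R}$

- If $A, B \in \mathcal{R}$ then $A \setminus B \in \mathcal{R}$

- If $A, B \in \mathcal{R}$ then $A \cup B \in \mathcal{R}$ (this implies finite unions are also in $\mathcal{R}$)

From the definition, we know that the intersection of two sets is also in $\mathcal{R}$ because $A \cap B = A \setminus (A \setminus B)$

Example: the collection of all finite unions of half-open intervals $(a, b]$ in $\mathbb{R}$ is a ring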

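Field

Definition: $\mathcal{A}$ is a field if it is a ring and $\Omega \in \mathcal{A}$

Equivalence: Since the whole set $\Omega$ is in $\mathcal{A}$, then

- the second condition of a ring is equivalent to being closed under complementation, as $A^c = \Omega \setminus A$ and $A \setminus B = A \cap B^c$

- the third condition of a ring is equivalent to being closed under finite unions, as $A_1 \cup \dots \cup A_n$ is built from pairwise unions by induction

Note: field + closure under countable unions $\Rightarrow$ σ-field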

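Set Function

Definition: A set function $\mu$ (not necessarily a measure) is a mapping from a collection of sets $\mathcal{A}$ to $[0, \infty]$ with $\mu(\emptyset) = 0$. For $A, B, A_i \in \mathcal{A}$, we say that $\mu$

- is monotone if $A \subseteq B$ implies $\mu(A) \le \mu(B)$

- is additive if $\mu(A \cup B) = \mu(A) + \mu(B)$ for disjoint $A, B$ with $A \cup B \in \mathcal{A}$

- is countably additive if $\mu\left(\bigcup_i A_i\right) = \sum_i \mu(A_i)$ for pairwise disjoint $A_i$ and $\bigcup_i A_i \in \mathcal{A}$

- is countably sub-additive if $\mu\left(\bigcup_i A_i\right) \le \sum_i \mu(A_i)$ for $A_i$ (not necessarily pairwise disjoint) with $\bigcup_i A_i \in \mathcal{A}$

- is a pre-measure if $\mu$ is countably additive and $\mathcal{A}$ is a ring. A pre-measure is also

- monotone because for $A \subseteq B$ we have $\mu(B) = \mu(A) + \mu(B \setminus A) \ge \mu(A)$

- sub-additive because for $A_1, A_2, \dots$ we can let $B_1 = A_1$ and $B_n = A_n \setminus \bigcup_{i<n} A_i$; then the $B_n$ are pairwise disjoint with the same union and, by monotonicity, $\mu\left(\bigcup_n A_n\right) = \sum_n \mu(B_n) \le \sum_n \mu(A_n)$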

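Outer Measure

Definition: For a pre-measure $\mu$ on a ring $\mathcal{A}$, the outer measure $\mu^*$ (not necessarily a measure) is defined as

$$\mu^*(B) = \inf\left\{ \sum_i \mu(A_i) : A_i \in \mathcal{A},\ B \subseteq \bigcup_i A_i \right\}$$

for any $B \subseteq \Omega$. This is the infimum of the sums of the pre-measure over all finite or countable collections of $A_i$ in $\mathcal{A}$ that cover $B$ (with $\mu^*(B) = \infty$ if no such cover exists).

Can we “measure” (with the outer measure) any $B \subseteq \Omega$? Not necessarily.

Denote by $\mathcal{M}$ the collection of all $\mu^*$-measurable sets, where we say that $A \subseteq \Omega$ is $\mu^*$-measurable when $\mu^*(B) = \mu^*(B \cap A) + \mu^*(B \cap A^c)$ for all $B \subseteq \Omega$.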

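Carathéodory Extension Theorem

Carathéodory Extension Theorem: Let $\mathcal{A}$ be a ring on $\Omega$ and $\mu: \mathcal{A} \to [0, \infty]$ be a pre-measure; then $\mu$ extends to a measure on $\sigma(\mathcal{A})$.

Note

- $\sigma(\mathcal{A})$ is the σ-field generated by $\mathcal{A}$, i.e. the one obtained by extending $\mathcal{A}$ to include countable unions, complements, and $\Omega$ itself.

- The “correct” extension is the outer measure $\mu^*$.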

ProofAssume and

- Prove stuff about

as is a pre-measure

is non-negative as is non-negative

is monotone (increasing)

Let and then for any s.t. . Then thus

is countably sub-additive

For and a given , let for s.t.

This is possible because is the infimum. As and as is monotone and is sub-additive, then

Take to get that is countably sub-additive

- Check that and coincide for all

This implies that because the right hand side is the cover of A.

- For any we have because is a cover of and is the infimum

- For the reverse, if then by countable sub-additivity and monotonicity

- Thus

- Check that the ring (all measurable sets)

- i.e. we want to show that is -measurable:

- Note as is sub-additive.

- Next, for some , choose s.t. and

- Furthermore, and thus and take .

- Show that is a -Field

- since

- since

- is closed under complementation since which implies that

- is closed under finite intersections since for and any thus . Properties above show that is a Field.

- To get to a σ-Field, let in be countable and pairwise disjoint and . Let , then Then by monotonicity and sub-additivity and let , we get Thus is closed under countable unions ( is a σ-Field!). Choose we have which means is countably additive.

- Conclusion: is a set function and it’s also a measure on . Since , then . Lastly, as is a measure on , it is also a measure on .

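π-System and λ-System

π-system: A collection of sets $\mathcal{P}$ is a π-system if

- $\emptyset \in \mathcal{P}$

- $A, B \in \mathcal{P}$ implies $A \cap B \in \mathcal{P}$.

λ-system: A collection of sets $\mathcal{L}$ is a λ-system if

- $\Omega \in \mathcal{L}$

- If $A, B \in \mathcal{L}$ and $A \subseteq B$ then $B \setminus A \in \mathcal{L}$.

- If $\{A_i\}_{i=1}^\infty$ is a sequence of pairwise disjoint sets in $\mathcal{L}$ then $\bigcup_{i=1}^\infty A_i \in \mathcal{L}$.

Note: a σ-field is a λ-system

Example: Let $\Omega = \{1, 2, 3, 4\}$ and let $\mathcal{L}$ contain all subsets with an even number of elements. Then $\mathcal{L}$ is a λ-system but not a σ-field, since $\{1, 2\}, \{2, 3\} \in \mathcal{L}$ but $\{1, 2\} \cap \{2, 3\} = \{2\} \notin \mathcal{L}$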
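The even-cardinality example can be checked by brute force. The sketch below assumes the ground set $\{1, 2, 3, 4\}$ (an illustrative choice; any finite set with an even number of at least four elements would do) and uses hypothetical helper names. On a finite space, closure under countable disjoint unions reduces to closure under pairwise disjoint unions.

```python
from itertools import combinations

# Assumed ground set for illustration only.
OMEGA = frozenset({1, 2, 3, 4})

def even_subsets(omega):
    """All subsets of omega with an even number of elements."""
    items = sorted(omega)
    return {frozenset(c)
            for r in range(0, len(items) + 1, 2)
            for c in combinations(items, r)}

def is_lambda_system(family, omega):
    """Check the three lambda-system axioms on a finite family."""
    if omega not in family:
        return False
    for a in family:
        for b in family:
            # Closed under proper differences: A subset of B gives B \ A.
            if a <= b and (b - a) not in family:
                return False
            # Closed under disjoint unions (pairwise suffices when finite).
            if not (a & b) and (a | b) not in family:
                return False
    return True

def is_pi_system(family):
    """Closed under pairwise intersections."""
    return all((a & b) in family for a in family for b in family)

L = even_subsets(OMEGA)
print(is_lambda_system(L, OMEGA), is_pi_system(L))
```

The family passes the λ-system check but fails the intersection check, which is exactly the gap that the π-system hypothesis closes in the theorem that follows.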

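Dynkin π-λ Theorem

Dynkin π-λ Theorem: Let $\mathcal{P}$ be a π-system, $\mathcal{L}$ be a λ-system and $\mathcal{P} \subseteq \mathcal{L}$. Then $\sigma(\mathcal{P}) \subseteq \mathcal{L}$.

Proof: Let $\lambda(\mathcal{P})$ be the smallest λ-system such that $\mathcal{P} \subseteq \lambda(\mathcal{P})$; then $\lambda(\mathcal{P}) \subseteq \mathcal{L}$. Our goal is to show that $\lambda(\mathcal{P})$ is also a π-system, because a collection of sets that is both a π-system and a λ-system is a σ-field. Then we necessarily have that $\sigma(\mathcal{P}) \subseteq \lambda(\mathcal{P}) \subseteq \mathcal{L}$.

We only need to show that $\lambda(\mathcal{P})$ is closed under intersections.

Let $\mathcal{L}_1 = \{B \in \lambda(\mathcal{P}) : B \cap A \in \lambda(\mathcal{P}) \text{ for all } A \in \mathcal{P}\}$. Then $\mathcal{P} \subseteq \mathcal{L}_1$ as $\mathcal{P}$ is a π-system, and let’s show that $\mathcal{L}_1$ is also a λ-system:

- $\Omega \in \mathcal{L}_1$ as $\Omega \cap A = A \in \lambda(\mathcal{P})$ for all $A \in \mathcal{P}$

- If $B_1, B_2 \in \mathcal{L}_1$ s.t. $B_1 \subseteq B_2$, then for any $A \in \mathcal{P}$ we have that $B_1 \cap A \subseteq B_2 \cap A$ with both in $\lambda(\mathcal{P})$. Thus $(B_2 \setminus B_1) \cap A = (B_2 \cap A) \setminus (B_1 \cap A) \in \lambda(\mathcal{P})$, which implies that $B_2 \setminus B_1 \in \mathcal{L}_1$

- If $B_i \in \mathcal{L}_1$ are pairwise disjoint, then for any $A \in \mathcal{P}$ the sets $B_i \cap A \in \lambda(\mathcal{P})$ are pairwise disjoint, thus $\bigcup_i (B_i \cap A) \in \lambda(\mathcal{P})$. This implies that $\left(\bigcup_i B_i\right) \cap A \in \lambda(\mathcal{P})$. Hence, $\bigcup_i B_i \in \mathcal{L}_1$.

By definition of $\mathcal{L}_1$, $\mathcal{L}_1 \subseteq \lambda(\mathcal{P})$, but $\lambda(\mathcal{P})$ is the smallest λ-system containing $\mathcal{P}$. Thus $\mathcal{L}_1 = \lambda(\mathcal{P})$. Therefore, $\lambda(\mathcal{P})$ contains all intersections of its elements with elements of $\mathcal{P}$.

Lastly, let $\mathcal{L}_2 = \{B \in \lambda(\mathcal{P}) : B \cap A \in \lambda(\mathcal{P}) \text{ for all } A \in \lambda(\mathcal{P})\}$; since $\mathcal{L}_1 = \lambda(\mathcal{P})$, $\mathcal{P} \subseteq \mathcal{L}_2$. Then the same argument as for $\mathcal{L}_1$ shows that $\mathcal{L}_2$ is a λ-system and thus $\mathcal{L}_2 = \lambda(\mathcal{P})$.

Therefore, $\lambda(\mathcal{P})$ is closed under intersections and thus a σ-field. This implies that $\sigma(\mathcal{P}) \subseteq \lambda(\mathcal{P}) \subseteq \mathcal{L}$.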

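Uniqueness of Extension Theorem

Uniqueness of Extension: Let $\mu, \nu$ be σ-finite measures on $(\Omega, \sigma(\mathcal{P}))$ where $\mathcal{P}$ is a π-system. Then, if $\mu(A) = \nu(A)$ for all $A \in \mathcal{P}$, then $\mu$ and $\nu$ are equal on $\sigma(\mathcal{P})$.

Proof (Finite Measures): Assume $\mu(\Omega) = \nu(\Omega) < \infty$.

Let $\mathcal{L} = \{A \in \sigma(\mathcal{P}) : \mu(A) = \nu(A)\}$. We only need to show that $\mathcal{L}$ is a λ-system because, since $\mathcal{P} \subseteq \mathcal{L}$, the Dynkin π-λ Theorem gives $\sigma(\mathcal{P}) \subseteq \mathcal{L}$, which means $\mu, \nu$ coincide on $\sigma(\mathcal{P})$.

- $\Omega \in \mathcal{L}$ by assumption

- If $A, B \in \mathcal{L}$ with $A \subseteq B$ then $\mu(B \setminus A) = \mu(B) - \mu(A) = \nu(B) - \nu(A) = \nu(B \setminus A)$, hence $B \setminus A \in \mathcal{L}$

- If $A_i \in \mathcal{L}$ are pairwise disjoint then $\mu\left(\bigcup_i A_i\right) = \sum_i \mu(A_i) = \sum_i \nu(A_i) = \nu\left(\bigcup_i A_i\right)$, hence $\bigcup_i A_i \in \mathcal{L}$.

$\mathcal{L}$ is a λ-system!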

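Proof (σ-Finite Measures)

For any $E \in \mathcal{P}$ s.t. $\mu(E) = \nu(E) < \infty$, we define $\mathcal{L}_E$ to be all $A \in \sigma(\mathcal{P})$ s.t. $\mu(A \cap E) = \nu(A \cap E)$.

Proceeding as in the proof above, we can show that $\mathcal{L}_E$ is a λ-system and thus $\sigma(\mathcal{P}) \subseteq \mathcal{L}_E$.

By σ-finiteness we decompose $\Omega = \bigcup_{i} E_i$ with $E_i \in \mathcal{P}$ and $\mu(E_i) = \nu(E_i) < \infty$. For any $A \in \sigma(\mathcal{P})$ and any $n$,

$$\mu\Big(\bigcup_{i=1}^n (A \cap E_i)\Big) = \sum_{i} \mu(A \cap E_i) - \sum_{i<j} \mu(A \cap E_i \cap E_j) + \cdots$$

where we use the inclusion-exclusion formula. This also works for $\nu$.

Since $\mathcal{P}$ is a π-system, $E_i \cap E_j \in \mathcal{P}$, as well as further intersections; thus $\mu\big(\bigcup_{i=1}^n (A \cap E_i)\big) = \nu\big(\bigcup_{i=1}^n (A \cap E_i)\big)$.

Letting $n \to \infty$, we get $\mu(A) = \nu(A)$.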

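Borel σ-Field

Lebesgue Measure

Definition: For any interval $(a, b]$ in $\mathbb{R}$, the Lebesgue measure $\lambda$ is defined as $\lambda((a, b]) = b - a$.

Are there any $A \subseteq \mathbb{R}$ s.t. $A$ is not $\lambda$-measurable? Yes.

Example: Vitali Set

Let $\Omega = [0, 1)$; for $x, y \in \Omega$ define addition mod 1, written $x \oplus y$.

Define $\mathcal{L}$ to contain all $\lambda$-measurable sets $A \subseteq [0, 1)$ s.t. $\lambda(A \oplus x) = \lambda(A)$ for any $x \in [0, 1)$, where $A \oplus x = \{a \oplus x : a \in A\}$ (shift by $x$); then we claim that $\mathcal{L}$ is a λ-system.

Since $\mathcal{P}$, the set containing all intervals $[a, b) \subseteq [0, 1)$, is a π-system, we have $\mathcal{P} \subseteq \mathcal{L}$ because $[a, b) \oplus x$ is a union of at most two intervals and $\lambda([a, b) \oplus x) = b - a$.

By the Dynkin π-λ Theorem, $\mathcal{B}([0, 1)) = \sigma(\mathcal{P}) \subseteq \mathcal{L}$,

i.e. every Borel subset of $[0, 1)$ is shift invariant w.r.t. $\lambda$.

Next, we say that $x \sim y$ if $x - y \in \mathbb{Q}$; then we can decompose $[0, 1)$ into disjoint equivalence classes.

Define $V$ s.t. $V$ contains one element from each equivalence class. (We can do this because of the Axiom of Choice.) Then no two points in $V$ are equivalent, i.e. $(V \oplus q) \cap (V \oplus r) = \emptyset$ for rationals $q \ne r$ in $[0, 1)$.

Thus $[0, 1) = \bigcup_{q \in \mathbb{Q} \cap [0, 1)} (V \oplus q)$ and, if $V$ were $\lambda$-measurable, by countable additivity

$$1 = \lambda([0, 1)) = \sum_{q \in \mathbb{Q} \cap [0, 1)} \lambda(V \oplus q) = \sum_{q \in \mathbb{Q} \cap [0, 1)} \lambda(V)$$

since $\lambda$ is translation invariant.

- If $\lambda(V) = 0$, then $1 = 0$.

- If $\lambda(V) > 0$, then $1 = \infty$,

which yields a contradiction. Thus $V$ is not $\lambda$-measurable and we call it a Vitali set.

Fun fact: Lebesgue measure on $\mathbb{R}$ is the only translation-invariant Borel measure that assigns measure 1 to the unit interval.

- Same for $\mathbb{R}^d$

- There is no $\infty$-dimensional Lebesgue measure, i.e. no non-trivial, locally finite translation-invariant measure.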

Product Measure

DefinitionFor two Measure Spaces and , define where for and .

Question : How are these related? and

From Dudley 4.1.7 , but these two are “usually” equal to each other. e.g.

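Independence

Independence for sets: For a countable collection of sets $\{A_i\}_{i \in I}$ in a probability space $(\Omega, \mathcal{F}, P)$, we say that the collection is independent if for all finite subsets $J \subseteq I$, we have

$$P\Big(\bigcap_{j \in J} A_j\Big) = \prod_{j \in J} P(A_j).$$

For a countable collection of σ-fields $\{\mathcal{F}_i\}_{i \in I}$, we say that this collection of σ-fields is independent if any collection of sets $\{A_i\}_{i \in I}$ with $A_i \in \mathcal{F}_i$ is independent in the sense of the previous definition.

Theorem: Let $\mathcal{P}_1, \mathcal{P}_2$ be π-systems. If $P(A_1 \cap A_2) = P(A_1) P(A_2)$ for any $A_1 \in \mathcal{P}_1$ and $A_2 \in \mathcal{P}_2$, then $\sigma(\mathcal{P}_1)$ and $\sigma(\mathcal{P}_2)$ are independent.

Proof: For a fixed $A_1 \in \mathcal{P}_1$, we can define two measures for $A \in \sigma(\mathcal{P}_2)$ as

$$\mu(A) = P(A_1 \cap A), \qquad \nu(A) = P(A_1) P(A).$$

By assumption, $\mu(A) = \nu(A)$ for any $A \in \mathcal{P}_2$. Hence, by the Uniqueness of Extension Theorem, they must coincide on $\sigma(\mathcal{P}_2)$. Therefore, $P(A_1 \cap A_2) = P(A_1) P(A_2)$ for a fixed $A_1 \in \mathcal{P}_1$ and any $A_2 \in \sigma(\mathcal{P}_2)$.

This argument can be repeated by fixing an element $A_2 \in \sigma(\mathcal{P}_2)$ to get that $P(A_1 \cap A_2) = P(A_1) P(A_2)$ for $A_1 \in \sigma(\mathcal{P}_1)$.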
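The requirement that the product rule hold for every finite sub-collection, and not only for pairs, can be seen on a small finite space. The sketch below is an illustration under assumed choices (two fair dice, three hypothetical parity events); it is not taken from the course notes.

```python
from fractions import Fraction
from itertools import combinations, product

# Assumed sample space: two fair six-sided dice, uniform probability.
OMEGA = list(product(range(1, 7), repeat=2))

def prob(event):
    """Exact probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for w in OMEGA if event(w)), len(OMEGA))

def independent(events):
    """Check the product rule on every sub-collection of size >= 2."""
    for r in range(2, len(events) + 1):
        for sub in combinations(events, r):
            joint = prob(lambda w: all(e(w) for e in sub))
            expected = Fraction(1)
            for e in sub:
                expected *= prob(e)
            if joint != expected:
                return False
    return True

first_even = lambda w: w[0] % 2 == 0
second_even = lambda w: w[1] % 2 == 0
sum_even = lambda w: (w[0] + w[1]) % 2 == 0

print(independent([first_even, second_even]))
print(independent([first_even, second_even, sum_even]))
```

Each pair of these events satisfies the product rule, yet the triple does not, so the collection is pairwise independent without being independent in the sense of the definition.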

Functions, Random Variables and Integration

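Simple Functions and Random Variables

Simple Random Variable: Let $(\Omega, \mathcal{F}, P)$ be a probability space, i.e. $P(\Omega) = 1$. A simple random variable $X: \Omega \to \mathbb{R}$ is a real-valued function that takes on only a finite number of values and such that the set $\{\omega : X(\omega) = x\} \in \mathcal{F}$ for every $x \in \mathbb{R}$.

One way to write such a function is to finitely partition $\Omega$ into disjoint sets $A_1, \dots, A_n \in \mathcal{F}$, i.e. $\bigcup_{i=1}^n A_i = \Omega$ and $A_i \cap A_j = \emptyset$ for $i \ne j$, and write

$$X(\omega) = \sum_{i=1}^n x_i \mathbf{1}_{A_i}(\omega).$$

Then, for distinct values $x_i$, the probability that $X$ is equal to $x_i$ is $P(X = x_i) = P(A_i)$.

Furthermore, this allows us to define the expectation of the simple random variable $X$ to be

$$E X = \sum_{i=1}^n x_i P(A_i).$$

Simple Measurable Function: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space. A simple function is $f: \Omega \to \mathbb{R}$ s.t.

$$f(\omega) = \sum_{i=1}^n a_i \mathbf{1}_{A_i}(\omega), \qquad A_i \in \mathcal{F},$$

which is a linear combination of indicator functions. The sets $A_i$ need not be disjoint, but given a simple function, we can always rewrite it in terms of disjoint $A_i$.

Then, we define the integral of a simple function to be

$$\int f \, d\mu = \sum_{i=1}^n a_i \mu(A_i).$$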
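As a small worked instance of the definitions above, the following sketch computes the expectation of a simple random variable in two ways: as a sum over the partition, and as a sum over outcomes. The fair die, the partition, and the values are hypothetical choices made only for illustration.

```python
from fractions import Fraction

# Assumed finite probability space: a fair six-sided die.
OMEGA = range(1, 7)
P = {w: Fraction(1, 6) for w in OMEGA}

# A partition of OMEGA into disjoint sets A_i, each paired with a value x_i.
partition = [({1, 2, 3}, 0), ({4, 5}, 10), ({6}, 100)]

def X(w):
    """X(w) = sum_i x_i * 1_{A_i}(w)."""
    return sum(x * (w in A) for A, x in partition)

def measure(A):
    """P(A) for a subset A of OMEGA."""
    return sum(P[w] for w in A)

def expectation(parts):
    """E X = sum_i x_i * P(A_i)."""
    return sum(x * measure(A) for A, x in parts)

print(expectation(partition))
print(sum(X(w) * P[w] for w in OMEGA))
```

Both sums agree, which is the finite version of the statement that the integral of a simple function does not depend on how it is written.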

Measurable Functions and Random Variables

To extend the above idea of a simple random variable, we want to replace the finite set of values with any Borel set $B$. We need two measurable spaces $(\Omega, \mathcal{F})$ and $(E, \mathcal{E})$.

Measurable Function: A function $f: \Omega \to E$ is said to be measurable (with respect to $\mathcal{F} / \mathcal{E}$) if $f^{-1}(B) \in \mathcal{F}$ for any $B \in \mathcal{E}$. When $\mathcal{E}$ is understood, we say that $f$ is $\mathcal{F}$-measurable.

Typically, the σ-fields of interest are the Borel σ-fields, sometimes written $\mathcal{B}(E)$ when $E$ is a topological space. Moreover, the space $E$ is typically taken to be $\mathbb{R}$ or $\mathbb{R}^d$. In this case, we say that $f$ is Borel measurable.

If we replace $\mathcal{B}(\mathbb{R})$ with $\mathcal{M}$, the set of Lebesgue measurable subsets of $\mathbb{R}$, then we say $f$ is Lebesgue measurable.

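Cool facts about Measurable Functions

Inverse images under functions preserve set operations, i.e. for $f: \Omega \to E$ and $A, A_i \subseteq E$,

$$f^{-1}\Big(\bigcup_i A_i\Big) = \bigcup_i f^{-1}(A_i), \qquad f^{-1}(E \setminus A) = \Omega \setminus f^{-1}(A).$$

For a measurable function $f$, this implies that $f^{-1}(\mathcal{E}) = \{f^{-1}(B) : B \in \mathcal{E}\}$ is a σ-field and is contained in $\mathcal{F}$. Hence, we want $f^{-1}(\mathcal{E})$ to be no larger than $\mathcal{F}$ to have measurable functions. Furthermore, this can be used to show that the measurability of $f$ can be established by looking only at a collection of sets that generates $\mathcal{E}$.

Example: the set of all half-lines $(-\infty, t]$ for $t \in \mathbb{R}$ generates $\mathcal{B}(\mathbb{R})$. Thus, $f: \Omega \to \mathbb{R}$ is measurable as long as the sets $\{\omega : f(\omega) \le t\}$ are measurable.

For any $A \in \mathcal{F}$, the indicator function $\mathbf{1}_A$ is measurable. The σ-field generated by $\mathbf{1}_A$ is simply $\{\emptyset, A, A^c, \Omega\}$.

For measurable functions $f, g: \Omega \to \mathbb{R}$, the functions $f + g$ and $fg$ are measurable.

For measurable functions $f_i$ from $\Omega$ to $\mathbb{R}$, the following are also measurable: $\sup_i f_i$, $\inf_i f_i$, $\limsup_i f_i$, $\liminf_i f_i$, and $\lim_i f_i$ if it exists.

Proof: In set notation, $\{\sup_i f_i \le t\} = \bigcap_i \{f_i \le t\}$, where the right-hand side is a countable intersection of measurable sets and hence measurable. Similarly, $\inf_i f_i = -\sup_i (-f_i)$, $\limsup_i f_i = \inf_n \sup_{i \ge n} f_i$, and $\liminf_i f_i = \sup_n \inf_{i \ge n} f_i$. If $\lim_i f_i$ exists then $\lim_i f_i = \limsup_i f_i = \liminf_i f_i$.

Let $f: X \to Y$ be a continuous function between topological spaces; then it is measurable with respect to the Borel σ-fields.

Proof: If $U$ is an open set in $Y$, then $f^{-1}(U)$ is open in $X$ (definition of a continuous function between two topological spaces). Thus, the set $f^{-1}(U)$ is measurable. Since the open sets of $Y$ generate $\mathcal{B}(Y)$, the function $f$ is measurable.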

Given a collection of functions , we can make them measurable by constructing the measurable space where is the -field generated by the sets for all and .

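Almost Surely / Almost Everywhere: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space. For two measurable functions $f, g: \Omega \to \mathbb{R}$, we say that $f = g$ a.e. (almost everywhere) when the set $\{\omega : f(\omega) \ne g(\omega)\}$ has measure $0$.

In probability theory, “almost everywhere” is replaced with “almost surely”, abbreviated a.s.; it is equivalently written “with probability 1” or w.p.1.

Example: Let $([0, 1], \mathcal{B}, \lambda)$ be the standard measure space of Borel sets on the unit interval with Lebesgue measure. Let $f(x) = 0$ for all $x$, and let $g(x) = 1$ on $\mathbb{Q} \cap [0, 1]$ and $g(x) = 0$ on $[0, 1] \setminus \mathbb{Q}$.

Then we have $f = g$ a.e., that is, $\lambda(\mathbb{Q} \cap [0, 1]) = 0$. To prove that $\lambda(\mathbb{Q} \cap [0, 1]) = 0$, we enumerate the rational numbers as $q_1, q_2, \dots$ and surround each $q_i$ with an interval of length $\varepsilon 2^{-i}$. For $\varepsilon > 0$, $\lambda(\mathbb{Q} \cap [0, 1]) \le \sum_{i=1}^\infty \varepsilon 2^{-i} = \varepsilon$. Since $\varepsilon$ is arbitrary, we have $\lambda(\mathbb{Q} \cap [0, 1]) = 0$.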

Integration

We will consider measurable functions mapping from to , which is called the extended real line. This allows us to handle sets such as .

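Notation: We use $f_n \uparrow f$ to represent a sequence of functions that are increasing and converge to $f$ for every $\omega$. This implies that $f_n(\omega) \le f_{n+1}(\omega)$ and $f_n(\omega) \to f(\omega)$ for all $\omega \in \Omega$.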

TheoremLet be a measurable space and a -system that generates (). Let be a linear space that contains

- all indicators and for each

- all functions such that s.t.

Then contains all measurable functions.

ProofFirst, . Let , we claim that is a -system. Since , by Dynkin - Theorem, . By the definition of , we have that , thus , so every indicator function is in .

Let be a non-negative measurable function, we can define . Each is a finite linear combination of indicator functions and hence . Furthermore, and thus .

Lastly, for a general measurable , we write where

which are both non-negative measurable functions.
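The approximation of a non-negative measurable function by simple functions used in this proof can be sketched numerically. The code below assumes the standard dyadic construction $f_n = \min(n, 2^{-n} \lfloor 2^n f \rfloor)$, which may differ in detail from the formula in the original notes, and uses a hypothetical target $f(x) = x^2$.

```python
import math

def dyadic_approx(f, n):
    """Return f_n = min(n, 2**-n * floor(2**n * f)), a simple function below f."""
    def f_n(x):
        return min(n, math.floor(2**n * f(x)) / 2**n)
    return f_n

f = lambda x: x * x                      # hypothetical non-negative target
xs = [i / 10 for i in range(31)]         # sample points in [0, 3]

for n in (1, 2, 5, 10):
    fn = dyadic_approx(f, n)
    gap = max(f(x) - fn(x) for x in xs)
    print(n, gap)
```

Each $f_n$ takes finitely many values, the sequence is non-decreasing in $n$, and wherever $f \le n$ the gap $f - f_n$ is below $2^{-n}$, which is what gives $f_n \uparrow f$.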

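Integral of a Measurable Function: For a non-negative measurable function $f$ on the measure space $(\Omega, \mathcal{F}, \mu)$, we define

$$\int f \, d\mu = \sup \left[ \sum_{i=1}^n \Big( \inf_{\omega \in A_i} f(\omega) \Big) \mu(A_i) \right]$$

where the supremum is taken over all finite partitions $\{A_i\}_{i=1}^n \subseteq \mathcal{F}$ of $\Omega$.

Inside the square brackets is the integral of the simple function that assigns the value $\inf_{\omega \in A_i} f(\omega)$ on the set $A_i$. Hence, for a non-negative $f$, we consider all simple functions $g$ such that $0 \le g \le f$ and define the integral of $f$ to be the supremum of the integrals of $g$. To extend this to all measurable functions $f$, we write $f = f^+ - f^-$ and then $\int f \, d\mu = \int f^+ \, d\mu - \int f^- \, d\mu$, provided at least one of the two integrals is finite.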

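MCT for Simple Functions: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space, let $f: \Omega \to [0, \infty]$ be measurable, and let $f_n$ be a sequence of measurable simple functions such that $0 \le f_n \uparrow f$. Then, $\int f_n \, d\mu \uparrow \int f \, d\mu$.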

ProofSince and is integrable, then and . To prove the other side of the inequality, let be any simple measureable function s.t. . Our goal is to show that as this will imply that .

First, we write where are disjoint partition of and similarly . Consequently,

and . Hence, we want to show that for each that

First, if , then the equality above must hold. If , we can divide by and without loss of generality, we take and consider for some set .

For any , let . Then , which means

By countable additivity of

and . Since , we have that . Take to zero gives .

Let $(\Omega, \mathcal{F}, \mu)$ be a measure space, and let $f, g$ be measurable with $f = g$ a.e. Then, $f$ is integrable if and only if $g$ is integrable. If $f$ and $g$ are integrable, then $\int f \, d\mu = \int g \, d\mu$.

ProofLet on where . For any measureable function , we can consider when coincide.

- True if is an indicator function because

- Also true for being a non-negative simple function.

- From MCT for Simple Functions, we can take non-negative simple functions to any non-negative measureable function.

- Lastly, write shows that the integrals will coincide for any integrable measureable function.

To complete the proof, we note that

This result basically tells us that we can modify functions on a set of measure zero without breaking anything.

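Monotone Convergence Theorem

Monotone Convergence Theorem: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space and let $f_n, f$ be measurable functions from $\Omega$ to $[-\infty, \infty]$ such that $f_n \uparrow f$ $\mu$-a.e. and $\int f_1 \, d\mu > -\infty$. Then $\int f_n \, d\mu \uparrow \int f \, d\mu$. (This means that we can swap the limit and the integral.)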

ProofFirst, we need to check that is measureable. For , we consider the sets , which generate the Borel -Field. Since , and , thus is measureable. (by the first cool fact about measurable functions)

Next, assume that , and for each we take simple functions such that as . Thus, by MCT for Simple Functions, . Furthermore, let . These are simple functions and . Once again, MCT for Simple Functions implies that . But since by construction, . Thus, . This means we are done for non-negative functions ().

Now, we assume that . In this case implies that . Let and , we have . Next, note that . Applying the above result gives that . Since all of the have finite integrals, we are allowed to subtract to get that and thus .

For a general function , we have and and . So by the above special cases, and . Finally, .

We only require to hold almost everywhere to establish the result. Hence convergence can fail on a set of measure (probability) zero and we still have convergence of the integrals.

Secondly, we can redo the above proof for with to get a similar result for decreasing sequence.

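Fatou’s Lemma

Fatou’s Lemma: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space and let $f_n$ be non-negative measurable functions from $\Omega$ to $[0, \infty]$. Then,

$$\int \liminf_{n \to \infty} f_n \, d\mu \le \liminf_{n \to \infty} \int f_n \, d\mu.$$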

ProofRecall that . Hence, let . Then, and by assumption. So MCT for Simple Functions says that . By construction, for any , thus for any and subsequently, . Taking gives .

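Dominated Convergence Theorem

Dominated Convergence Theorem: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space and let $f_n$, $f$ and $g$ be measurable functions with $g$ absolutely integrable. If $|f_n| \le g$ for all $n$ and $f_n(\omega) \to f(\omega)$ for each $\omega \in \Omega$ (i.e. pointwise convergence), then $f$ is absolutely integrable and $\int f_n \, d\mu \to \int f \, d\mu$.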

ProofLet and . Then . We have that and . So MCT for Simple Functions implies that .

Do the same for , we have that and hence that . Since , we have the desired result that .
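A standard example shows why a dominating function is needed and that the inequality in Fatou's Lemma can be strict. The sketch below assumes Lebesgue measure on $(0, 1]$ and the sequence $f_n = n \mathbf{1}_{(0, 1/n]}$; both are illustrative choices and do not appear in the notes.

```python
from fractions import Fraction

def f(n, x):
    """f_n = n * indicator of (0, 1/n]."""
    return n if 0 < x <= Fraction(1, n) else 0

def integral(n):
    """Exact integral of the simple function f_n: value n times length 1/n."""
    return n * Fraction(1, n)

x = Fraction(1, 7)
tail = [f(n, x) for n in range(8, 50)]   # values of f_n(x) once 1/n < x

print([integral(n) for n in (1, 10, 100)])
print(f(7, x), set(tail))
```

Every $f_n$ integrates to 1, yet $f_n(x) \to 0$ for each fixed $x$, so the limit of the integrals (1) differs from the integral of the limit (0). This is consistent with Fatou's Lemma, since $0 \le 1$, and with the Dominated Convergence Theorem, since $\sup_n f_n$ is not integrable on $(0, 1]$.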

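Lebesgue-Stieltjes Measure

Let $(\Omega, \mathcal{F})$ and $(E, \mathcal{E})$ be two measurable spaces. Let $f: \Omega \to E$ be a measurable function and $\mu$ be a measure on $(\Omega, \mathcal{F})$. Then we can define $\nu = \mu \circ f^{-1}$ to be a measure on $(E, \mathcal{E})$. This allows us to turn Lebesgue measure into Lebesgue-Stieltjes measures.

Theorem: Let $F: \mathbb{R} \to \mathbb{R}$ be non-constant, right-continuous, and non-decreasing. Then, there exists a unique measure $\mu_F$ on $(\mathbb{R}, \mathcal{B})$ such that for all $a, b \in \mathbb{R}$ with $a < b$,

$$\mu_F((a, b]) = F(b) - F(a).$$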

ProofLet and . We define an open interval and define . We want to define to be where is Lebesgue Measure on , so we need to show that this makes sense.

We first show that is left-continuous and non-decreasing and for and if and only if . To show this, fix a and let . As is non-decreasing, if and then . As is right-continuous, if and then . Therefore, and if and only if . Secondly, for , we have that and thus . So if , then and thus , which implies that is left continuous and non-decreasing.

From the above, is Borel Measurable and thus defining gives us that

Furthermore, this measure, , is unique by using the same arguments as before for Lebesgue Measure.

In the case that such that the interval , we have a cumulative distribution function, which induces a measure on the real line. This allows us to do things like integrate with respect to such measures——i.e. take an expectation.

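Radon Measure

Definition: Let $(\Omega, \mathcal{B}, \mu)$ be a measure space where $\mathcal{B}$ is the Borel σ-field. The measure $\mu$ is said to be a Radon measure if $\mu(K) < \infty$ for all compact $K$.

Lebesgue measure $\lambda$ is a Radon measure.

Every non-zero Radon measure on $\mathbb{R}$ can be written as $\mu_F$ for some $F$.

If $\mu$ is a Radon measure on $\mathbb{R}$, then we can define $F$ as

$$F(x) = \begin{cases} \mu((0, x]) & x \ge 0 \\ -\mu((x, 0]) & x < 0. \end{cases}$$

Thus, $\mu((a, b]) = F(b) - F(a)$ for $a < b$ and hence $\mu = \mu_F$ by uniqueness.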

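Product Measure

Definition: Given two σ-fields $\mathcal{F}_1$ and $\mathcal{F}_2$, we define the product σ-field to be $\mathcal{F}_1 \otimes \mathcal{F}_2$, which is the σ-field generated by the rectangles $A_1 \times A_2$ where $A_1 \in \mathcal{F}_1$ and $A_2 \in \mathcal{F}_2$. The collection of all rectangles will be denoted as $\mathcal{R} = \{A_1 \times A_2 : A_1 \in \mathcal{F}_1, A_2 \in \mathcal{F}_2\}$.

Monotone Class

Definition: A collection $\mathcal{M}$ of subsets of $\Omega$ is said to be monotone if

- for $A_n \in \mathcal{M}$ s.t. $A_n \subseteq A_{n+1}$ and $A = \bigcup_n A_n$, then $A \in \mathcal{M}$.

- for $A_n \in \mathcal{M}$ s.t. $A_n \supseteq A_{n+1}$ and $A = \bigcap_n A_n$, then $A \in \mathcal{M}$.

Note that if a field is also monotone, then it is a σ-field (by definition of σ-field).

Monotone Class Theorem: Let $\mathcal{A}$ be a field and $\mathcal{M}$ be monotone such that $\mathcal{A} \subseteq \mathcal{M}$. Then, $\sigma(\mathcal{A}) \subseteq \mathcal{M}$.

Proof: This proof is similar to the one for the Dynkin π-λ Theorem.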

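Existence and Uniqueness of Product Measure

Existence and Uniqueness Theorem of Product Measure: Let $(\Omega_1, \mathcal{F}_1, \mu_1)$ and $(\Omega_2, \mathcal{F}_2, \mu_2)$ be σ-finite measure spaces. We denote the product σ-field by $\mathcal{F} = \mathcal{F}_1 \otimes \mathcal{F}_2$. (The cartesian product of two σ-fields may not be a σ-field.) Let $\mu$ be a set function on the rectangles such that for $A_1 \in \mathcal{F}_1$ and $A_2 \in \mathcal{F}_2$, $\mu(A_1 \times A_2) = \mu_1(A_1) \mu_2(A_2)$. Then, $\mu$ extends uniquely to a measure on $\mathcal{F}$ such that for any $E \in \mathcal{F}$,

$$\mu(E) = \int \int \mathbf{1}_E(x, y) \, d\mu_1(x) \, d\mu_2(y) = \int \int \mathbf{1}_E(x, y) \, d\mu_2(y) \, d\mu_1(x).$$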

LemmaLet and be finite measure spaces, and let

Then . ( This means that )

ProofLet for and , i.e. . Then,

Therefore, .

Also, for disjoint , . Hence, contains finite disjoint unions of . This implies that the field generated by the set of rectangles . (Dudley 3.2.3)

Next, consider . Then if , then MCT says

and

Thus, and the same holds if . Therefore, is a monotone class. Finally, applying the Monotone Class Theorem shows that .

Proof : Existence and Uniqueness of Product MeasureFirst, we consider the case that and are finite measures and . Then we extend to a set function

for any . The above lemma says that the definition makes sense and the order of integration can be reversed for any . Linearity of the integral implies that is finitely additive. Applying MCT shows that is countably additive. Thus, is a measure on .

To show that is unique, let be some other set function such that for . Let . Then, is a monotone class because for , we can rewrite where and for are disjoint. So by countable additivity, and we can do the same for . Thus, by Monotone Class Theorem . Therefore, is unique on for finite measures and .

Now let and be -finite measures. Let and be disjoint partitions of and , respectively, such that and . Then, for any , we define . From the above finite measure case,

Sum over all and and apply MCT again to get

Furthermore, monotone convergence implies that is countably additive and hence a measure on . For any other measure such that , countable additivity and uniqueness for finite measures imply that

Hence, the extension of to is unique.

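Fubini-Tonelli

Fubini-Tonelli Theorem: Let $(\Omega_1, \mathcal{F}_1, \mu_1)$ and $(\Omega_2, \mathcal{F}_2, \mu_2)$ be σ-finite measure spaces with product measure $\mu$, and let $f: \Omega_1 \times \Omega_2 \to \mathbb{R}$ be measurable with respect to $\mathcal{F}_1 \otimes \mathcal{F}_2$ such that either $f \ge 0$ (non-negative) or $\int |f| \, d\mu < \infty$ (absolutely integrable). Then,

$$\int f \, d\mu = \int \int f(x, y) \, d\mu_1(x) \, d\mu_2(y) = \int \int f(x, y) \, d\mu_2(y) \, d\mu_1(x).$$

Also, $x \mapsto \int f(x, y) \, d\mu_2(y)$ is $\mathcal{F}_1$-measurable and $y \mapsto \int f(x, y) \, d\mu_1(x)$ is $\mathcal{F}_2$-measurable.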

ProofWe have this for indicator functions from Existence and Uniqueness Theorem of Product Measure and thus the result for simple functions as integrals are linear.

Then, applying MCT to simple functions gives us that the above holds for non-negative measureable functions.

Instead, assume . Then, we can write and the above holds for and separately. i.e. for -almost-every and for -almost-every and similarly for . Therefore,

and thus,

As we only require finiteness to occur almost everywhere to have the integral exist, we can integrate both sides of the above with respect to to get

Do the same swapping and to conclude the theorem.

The above theorem lets us swap the order of integration for the product of two measure spaces. This can be extended by induction to the finite product of measure spaces.
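The hypotheses (non-negativity or absolute integrability) cannot simply be dropped. A classical counterexample uses counting measure on $\mathbb{N} \times \mathbb{N}$, where integrals are sums. The array below is a hypothetical illustration, not part of the notes: it is $+1$ on the diagonal and $-1$ just above it, so it is neither non-negative nor absolutely summable.

```python
def a(m, n):
    """Assumed array on N x N: +1 on the diagonal, -1 just above it."""
    if n == m:
        return 1
    if n == m + 1:
        return -1
    return 0

def row_sum(m):
    # Row m is supported on n in {m, m + 1}, so a finite range is the full sum.
    return sum(a(m, n) for n in range(m + 2))

def col_sum(n):
    # Column n is supported on m in {n - 1, n}.
    return sum(a(m, n) for m in range(n + 1))

N = 50
rows_first = sum(row_sum(m) for m in range(N))   # sum over n, then over m
cols_first = sum(col_sum(n) for n in range(N))   # sum over m, then over n
print(rows_first, cols_first)
```

Every row sums to 0 while the first column sums to 1 and all other columns to 0, so the two iterated sums disagree for every truncation level `N`.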

Probability Theory

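$L^p$ Spaces

$L^p$ Space: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space and $f$ a measurable function; then we say $f \in L^p(\Omega, \mathcal{F}, \mu)$, for $p \in [1, \infty)$, if

$$\int |f|^p \, d\mu < \infty.$$

For $p = \infty$, we say $f \in L^\infty(\Omega, \mathcal{F}, \mu)$ if

$$\inf\{t \ge 0 : \mu(|f| > t) = 0\} < \infty.$$

This definition allows us to write down the $L^p$ norm, which is defined as:

$$\|f\|_p = \left( \int |f|^p \, d\mu \right)^{1/p} \text{ for } p \in [1, \infty), \qquad \|f\|_\infty = \inf\{t \ge 0 : \mu(|f| > t) = 0\}.$$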

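Markov / Chebyshev and Jensen’s Inequalities

Markov’s Inequality: Let $f$ be a non-negative measurable function and $t > 0$. Then, denoting $\{f \ge t\} = \{\omega : f(\omega) \ge t\}$,

$$\mu(f \ge t) \le \frac{1}{t} \int f \, d\mu.$$

Proof: Noting that $t \mathbf{1}_{\{f \ge t\}} \le f$, then by monotonicity of the integral,

$$t \, \mu(f \ge t) = \int t \mathbf{1}_{\{f \ge t\}} \, d\mu \le \int f \, d\mu,$$

which proves the theorem.

There are two useful ways to use Markov’s inequality:

- Chebyshev’s Inequality: For measurable $f$ and $t > 0$, $\mu(|f| \ge t) \le t^{-2} \int f^2 \, d\mu$.

- Chernoff’s Inequality: For measurable $f$, $t \in \mathbb{R}$ and any $s > 0$, $\mu(f \ge t) \le e^{-st} \int e^{sf} \, d\mu$. In probability theory, the integral on the right-hand side is the moment generating function or the Laplace transform.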
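Both bounds can be checked exactly on a small probability space. The sketch below assumes a fair six-sided die with $f(\omega) = \omega$; the thresholds `t` and `s` are arbitrary illustrative values, and Chebyshev's inequality is applied to the centred function $f - \int f \, d\mu$.

```python
from fractions import Fraction

# Assumed probability space: a fair six-sided die, f(w) = w.
P = {w: Fraction(1, 6) for w in range(1, 7)}
f = lambda w: w

def integral(g):
    """Integral of g with respect to P (a finite sum)."""
    return sum(g(w) * p for w, p in P.items())

def measure(pred):
    """P of the set where the predicate holds."""
    return sum(p for w, p in P.items() if pred(w))

t = 5
markov_lhs = measure(lambda w: f(w) >= t)
markov_rhs = integral(f) / t

mean = integral(f)
s = 2
cheb_lhs = measure(lambda w: abs(f(w) - mean) >= s)
cheb_rhs = integral(lambda w: (f(w) - mean) ** 2) / s**2

print(markov_lhs, markov_rhs)
print(cheb_lhs, cheb_rhs)
```

In both cases the left-hand side is well below the bound, which is typical: these inequalities hold for every distribution and are rarely tight for a particular one.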

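Convex Functions on $\mathbb{R}$: Let $I \subseteq \mathbb{R}$ be an interval. A function $\varphi: I \to \mathbb{R}$ is convex if for all $x, y \in I$ and all $t \in [0, 1]$,

$$\varphi(t x + (1 - t) y) \le t \varphi(x) + (1 - t) \varphi(y).$$

Jensen’s Inequality: Let $(\Omega, \mathcal{F}, P)$ be a probability space and $X$ an integrable random variable (i.e. a measurable function in $L^1$) such that $X: \Omega \to I$. For any convex $\varphi: I \to \mathbb{R}$,

$$\varphi\left( \int X \, dP \right) \le \int \varphi(X) \, dP,$$

which is $\varphi(E X) \le E \varphi(X)$.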

ProofFor some , if , -a.e., then the result is immediate. Otherwise, let be the mean of , which lies in the interior of interval. Then, we can choose such that for all with equality at . Then, , and

Lastly, to check that is well defined (i.e. not ), we note that where is concave and . Hence, .

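Hölder and Minkowski’s Inequalities

Hölder’s Inequality: Let $p, q \in [1, \infty]$ be conjugate indices (i.e. $1/p + 1/q = 1$) and $f$ and $g$ be measurable; then

$$\|fg\|_1 \le \|f\|_p \|g\|_q.$$

Proof: If either $p = 1$ or $p = \infty$, then the result is immediate. Hence, for $p, q \in (1, \infty)$ and $f$ such that $0 < \|f\|_p < \infty$, we can normalize $f$ and without loss of generality assume that $\|f\|_p = 1$. Then, we can define a probability measure $P$ on $\mathcal{F}$ such that for any $A \in \mathcal{F}$,

$$P(A) = \int_A |f|^p \, d\mu.$$

Then, using Jensen’s Inequality with the convex function $x \mapsto x^q$ and this probability measure $P$,

From Hölder’s inequality with $p = q = 2$, we can derive the Cauchy-Schwarz Inequality:

Cauchy-Schwarz Inequality: For measurable $f$ and $g$, $\|fg\|_1 \le \|f\|_2 \|g\|_2$.

Minkowski’s Inequality: Let $p \in [1, \infty]$ and $f$, $g$ be measurable functions; then

$$\|f + g\|_p \le \|f\|_p + \|g\|_p.$$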

ProofIf either or or , then we are done. If , then and the result follows quickly. For and conjugates, we note that

Then using the above equality, we have

Divide both sides by finishes the proof.
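For counting measure on a finite set, $L^p$ norms are ordinary vector $p$-norms, so both inequalities can be checked numerically. The vectors and the conjugate pair $p = 3$, $q = 3/2$ below are arbitrary illustrative choices, and `p_norm` is a hypothetical helper.

```python
def p_norm(v, p):
    """L^p norm with respect to counting measure on a finite index set."""
    return sum(abs(x) ** p for x in v) ** (1 / p)

f = [1.0, -2.0, 3.0, 0.5]
g = [0.3, 4.0, -1.0, 2.0]
p, q = 3.0, 1.5          # conjugate indices: 1/3 + 1/1.5 = 1

# Hoelder: ||fg||_1 <= ||f||_p * ||g||_q
holder_lhs = sum(abs(x * y) for x, y in zip(f, g))
holder_rhs = p_norm(f, p) * p_norm(g, q)

# Minkowski: ||f + g||_p <= ||f||_p + ||g||_p
mink_lhs = p_norm([x + y for x, y in zip(f, g)], p)
mink_rhs = p_norm(f, p) + p_norm(g, p)

print(holder_lhs, holder_rhs)
print(mink_lhs, mink_rhs)
```

A numerical check of this kind is only a sanity test on one example, but it is a quick way to catch a mis-stated exponent or a non-conjugate pair.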

Approximation TheoremLet ) be a measure space, and let be a -system such that and for all and there exists . Let the collection of simple functions be

For and for all and all , there exists a such that .

ProofFor any . Thus for all . Since is a linear space, .

Next, let be all that can be approximated as above by some and . Let be approximated by then by Minkowski’s Inequality,

Hence, is also a linear space.

Now, assume ( i.e. ). Let , which we will show is, in fact, a -system. We know that and thus . For such that then since is linear, so . Lastly, for pairwise disjoint with , let and . Then, and . Therefore, , and thus is a -system. By Dynkin - Theorem, and thus for any . Therefore, for any non-negative , we can construct simple functions such that . Then pointwise and . Hence, by Dominated Convergence Theorem, . Thus and by the linearity of , .

Lastly, for general , we have by assumption a sequence . Hence, for any , we have that and similarly to above, pointwise and . Therefore, by dominated convergence. Thus, .

Convergence in Probability & Measure

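Convergence of Measure

Weak Convergence of Measure: Let $(X, d)$ be a metric space and $\mathcal{B}$ be the Borel σ-field on $X$. Then, for a measure $\mu$ and a sequence $\mu_n$, we say that $\mu_n$ converges weakly to $\mu$, i.e. $\mu_n \Rightarrow \mu$, if

$$\int f \, d\mu_n \to \int f \, d\mu$$

for all $f \in C_b(X)$, the continuous bounded real-valued functions on $X$.

Portmanteau Theorem: For probability measures $\mu_n$ and $\mu$ on a metric space $(X, d)$, the following are equivalent:

- (1) $\mu_n \Rightarrow \mu$

- (2) $\int f \, d\mu_n \to \int f \, d\mu$ for all bounded uniformly continuous functions $f$

- (3) $\limsup_n \mu_n(C) \le \mu(C)$ for all closed sets $C$

- (4) $\liminf_n \mu_n(U) \ge \mu(U)$ for all open sets $U$

- (5) $\lim_n \mu_n(A) = \mu(A)$ for all sets $A \in \mathcal{B}$ with $\mu(\partial A) = 0$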

Proof

(1)$\Rightarrow$(2) If convergence holds for every $f \in C_b(X)$, then it certainly holds for all bounded uniformly continuous $f$.

(2)(3) For any closed and there exists a such that for , we have as as . Then, we can define an such that on , on . Then is uniformly continuous (by Urysohn’s Lemma) and Then, by (2), we have that

Thus, taking the and to zero gives

(3)(1) Let . Our goal is to show that and similarly for to show (1) holds. As is bounded, we can shift and scale it, and without loss of generality, we assume that . Then, for any choice of , we define nested closed sets for all and cut into pieces to get

Also, , the above becomes

Thus,

Taking gives . Replacing with gives . Thus the and coincide proving that (3)(1).

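(3)$\Leftrightarrow$(4) Let $U$ be the complement of $C$. Then, $\mu_n(U) = 1 - \mu_n(C)$. Thus, $\limsup_n \mu_n(C) \le \mu(C)$ is equivalent to $\liminf_n \mu_n(U) \ge \mu(U)$.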

(3)(4)(5) For any set with , is an open set and is a closed set. If (3) and (4) hold, we have that

Since , we have that . This is equivalent to , which is (5).

Convergence of Random Variables

In contrast to convergence of measures, let be a probability space and be a metric space with Borel sets as above. Then, for a random variable (i.e. measurable function) , we can define a probability measure

This is the distribution of . Then the expectation of a random variable can be written in multiple ways due to change of variables:

Note that above Portmanteau Theorem can be rephrased for random variables as well.

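Convergence in Distribution: For a sequence of random variables $X_n$, we say that $X_n$ converges to $X$ in distribution (denoted $X_n \xrightarrow{d} X$) if $\mu_{X_n} \Rightarrow \mu_X$.

Convergence in Probability: For a sequence of random variables $X_n$, we say that $X_n$ converges to $X$ in probability (denoted $X_n \xrightarrow{P} X$) if for all $\varepsilon > 0$

$$P(\{\omega : d(X_n(\omega), X(\omega)) > \varepsilon\}) \to 0 \text{ as } n \to \infty.$$

In short, $P(d(X_n, X) > \varepsilon) \to 0$.

This means that the measure of the set of $\omega$ where $X_n$ and $X$ differ by more than $\varepsilon$ goes to zero as $n \to \infty$. Convergence in probability is closely connected to the metric on .

Convergence Almost Surely: For a sequence of random variables $X_n$, we say that $X_n$ converges almost surely to $X$ (denoted $X_n \xrightarrow{a.s.} X$) if

$$P(\{\omega : X_n(\omega) \to X(\omega)\}) = 1,$$

i.e. pointwise convergence almost everywhere.

Convergence in $L^p$: For a sequence of random variables $X_n$, we say that $X_n$ converges to $X$ in $L^p$ if

$$E\left[ d(X_n, X)^p \right] \to 0.$$

Here, we can think of $d(X_n, X)$ as a function from $\Omega$ to $\mathbb{R}$. In the case that we have real-valued random variables, i.e. $X = \mathbb{R}$, this is $E|X_n - X|^p \to 0$.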

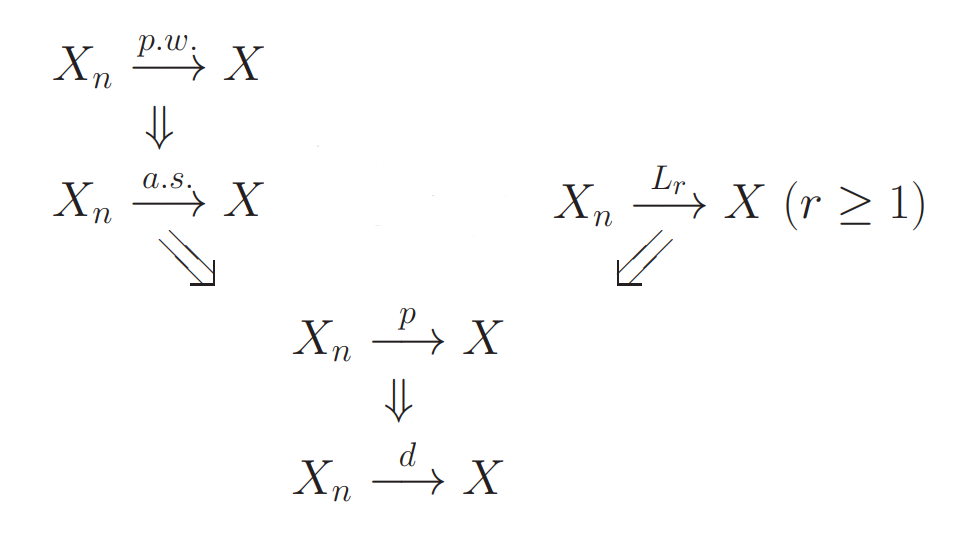

Hierarchy of Convergence Types

- Conv a.s. $\Rightarrow$ conv in probability

- Conv in probability $\Rightarrow$ conv in distribution

- For $p \ge q \ge 1$, conv in $L^p$ $\Rightarrow$ conv in $L^q$

- For any $p \ge 1$, conv in $L^p$ $\Rightarrow$ conv in probability
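None of these implications reverses in general. A common illustration that convergence in probability does not imply almost sure convergence is the "typewriter" sequence of indicators of shrinking dyadic intervals sweeping across $[0, 1)$. The sketch below assumes Lebesgue measure on $[0, 1)$ as the probability space; the function names and the sample point `w` are hypothetical.

```python
def typewriter(n):
    """Map n >= 1 to the dyadic interval [k/2**m, (k+1)/2**m) with n = 2**m + k."""
    m = n.bit_length() - 1
    k = n - 2**m
    return k / 2**m, (k + 1) / 2**m

def X(n, w):
    """X_n(w) = 1 if w lies in the n-th interval, else 0."""
    lo, hi = typewriter(n)
    return 1 if lo <= w < hi else 0

def prob_nonzero(n):
    """Lebesgue measure of {X_n = 1}, i.e. the interval length."""
    lo, hi = typewriter(n)
    return hi - lo

w = 0.3
hits = [n for n in range(1, 2**10) if X(n, w) == 1]
print(prob_nonzero(2**9), len(hits))
```

The probability that $X_n \ne 0$ shrinks to zero, so $X_n \to 0$ in probability (and in every $L^p$), yet each fixed $\omega$ is hit once per dyadic level, so $X_n(\omega) = 1$ infinitely often and the sequence converges at no point.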

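Borel-Cantelli Lemmas

Let $(\Omega, \mathcal{F}, P)$ be a probability space. For $A_n \in \mathcal{F}$, we define

$$\limsup_n A_n = \bigcap_{n=1}^\infty \bigcup_{m \ge n} A_m, \qquad \liminf_n A_n = \bigcup_{n=1}^\infty \bigcap_{m \ge n} A_m.$$

The set $\limsup_n A_n$ is sometimes referred to as “$A_n$ infinitely often” or i.o. This is because $\omega \in \limsup_n A_n$ implies that for any $n$ there exists an $m \ge n$ such that $\omega \in A_m$. Similarly, some write “$A_n$ eventually” or ev. for $\liminf_n A_n$. This is because for $\omega \in \liminf_n A_n$ there exists an $n$ large enough such that $\omega \in A_m$ for all $m \ge n$.

1st Borel-Cantelli Lemma: Let $A_n \in \mathcal{F}$ with $n \in \mathbb{N}$. If $\sum_n P(A_n) < \infty$, then $P(A_n \text{ i.o.}) = 0$.

Proof: As the summation converges, the tail sum has to tend to zero, and we have simply that

$$P(A_n \text{ i.o.}) \le P\Big( \bigcup_{m \ge n} A_m \Big) \le \sum_{m \ge n} P(A_m) \to 0$$

as $n \to \infty$.

2nd Borel-Cantelli Lemma: Let $A_n \in \mathcal{F}$ be an independent collection with $n \in \mathbb{N}$. If $\sum_n P(A_n) = \infty$, then $P(A_n \text{ i.o.}) = 1$.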

ProofNote that for all and we have that the independence of the implies the independence . Therefore, for any and ,

Taking takes the right hand side to zero. Hence, for all . Thus,

which is the desired result.

Law of Large Numbers

Let be random variables from to . Hence, for any , we write

and . Furthermore, we define the partial sum , which is also a measurable random variable.

IndependenceFor random variables and on the same probability space but possibly with different codomains, and respectively, we say that and are independent if

for all and .

This definition can be extended to a finite collection of random variables implying

for all . We say that an infinite collection of random variables is independent if all finite collections are independent.

Note that since $\{X \in A\}$ is shorthand for $X^{-1}(A)$, random variables $X$ and $Y$ are independent if and only if the σ-fields $\sigma(X)$ and $\sigma(Y)$ are independent (as defined in the Independence section above).

Identically DistributedFor , the distribution of is the measure induced by on , i.e., for . We say that and are identically distributed if and coincide almost surely.

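Weak Law of Large Numbers

Weak Law of Large Numbers: Let $(\Omega, \mathcal{F}, P)$ be a probability space and $X_1, X_2, \dots$ be random variables (measurable functions) from $\Omega$ to $\mathbb{R}$ such that $E X_i = m$ and $\mathrm{Var}(X_i) = \sigma^2 < \infty$ for all $i$ and $X_i$, $X_j$ are independent for all $i \ne j$. Then $S_n / n \xrightarrow{P} m$.

Proof: Without loss of generality, we assume $m = 0$. Otherwise, we can replace $X_i$ with $X_i - m$. Then, for any $\varepsilon > 0$, Chebyshev’s inequality implies that

$$P\left( \left| \frac{S_n}{n} \right| \ge \varepsilon \right) \le \frac{\mathrm{Var}(S_n)}{n^2 \varepsilon^2} = \frac{\sigma^2}{n \varepsilon^2} \to 0$$

as $n \to \infty$.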

Note that in the above proof, we only require that the be uncorrelated (i.e. ) and not independent. In the next theorem, we require independence, but remove all second moment conditions.
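The Chebyshev bound used in the proof can be compared with the exact tail probability when the $X_i$ are fair coin flips, since $S_n$ is then binomial. This is a minimal sketch under that assumption (so $m = 1/2$ and $\sigma^2 = 1/4$); the function names are hypothetical, and exact rational arithmetic avoids rounding at the boundary $|S_n/n - 1/2| = \varepsilon$.

```python
from fractions import Fraction
from math import comb

def tail_prob(n, eps):
    """Exact P(|S_n/n - 1/2| >= eps) for n independent fair coin flips."""
    half = Fraction(1, 2)
    count = sum(comb(n, k) for k in range(n + 1)
                if abs(Fraction(k, n) - half) >= eps)
    return Fraction(count, 2**n)

def chebyshev_bound(n, eps):
    """sigma^2 / (n * eps^2) with sigma^2 = 1/4 for a fair coin."""
    return Fraction(1, 4) / (n * eps**2)

eps = Fraction(1, 10)
for n in (10, 100, 1000):
    print(n, float(tail_prob(n, eps)), float(chebyshev_bound(n, eps)))
```

The exact tail decays far faster than the $1/n$ rate of the bound, which is consistent with Chebyshev's inequality using only the second moment.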

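Strong Law of Large Numbers

Strong Law of Large Numbers: Let $X_1, X_2, \dots$ be i.i.d. random variables from $\Omega$ to $\mathbb{R}$.

- If $E|X_1| = \infty$, then $S_n / n$ does not converge to any finite value.

- If $E|X_1| < \infty$, then $S_n / n \xrightarrow{a.s.} m$ for $m = E X_1$.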

Proof

Assume that but also that , and note that . Since , then and 2nd Borel-Cantelli Lemma says that for infinitely many . Thus

Thus .

Assume that . Without loss of generality, we assume for all . Otherwise, we can write and independence of and implies independence for and . Also, we use to denote the distribution of , i.e. .

We define and . For any , we can define a non-decreasing integer sequence . Then and . Therefore,

for some constant . We also note that . By Chebyshev’s inequality, , depending on and such that

And thus . Hence, by 1st Borel-Cantelli Lemma, . Since , we have that and in turn that .

To get back to and , we note that if and only if . Thus and 1st Borel-Cantelli Lemma says that so for large enough, a.s. We define “large enough” to be ( i.e. ) Furthermore, and as , meaning that the contribution of the terms where and may not coincide becomes negligible. Hence, , so we have almost sure convergence of a subsequence.

Finally, since , there exists an large enough such that . Thus, for ,

Thus, taking concludes the proof.

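Central Limit Theorem

Gaussian Measure on $\mathbb{R}$: A Borel measure $\gamma$ on $\mathbb{R}$ is said to be Gaussian with mean $m$ and variance $\sigma^2$ if

$$\gamma((a, b]) = \frac{1}{\sqrt{2 \pi \sigma^2}} \int_a^b \exp\left( -\frac{(x - m)^2}{2 \sigma^2} \right) dx.$$

Gaussian Measure on $\mathbb{R}^d$: A Borel measure $\gamma$ on $\mathbb{R}^d$ is said to be Gaussian if for all linear functionals $f: \mathbb{R}^d \to \mathbb{R}$, the induced measure $\gamma \circ f^{-1}$ on $\mathbb{R}$ is Gaussian.

Gaussian Random Variable: A random variable $X$ from a probability space $(\Omega, \mathcal{F}, P)$ to $\mathbb{R}^d$ is said to be Gaussian if $P \circ X^{-1}$ is a Gaussian measure on $\mathbb{R}^d$.

Characteristic Function: For a probability measure $\mu$ on $\mathbb{R}^d$, the characteristic function (Fourier transform) $\hat{\mu}: \mathbb{R}^d \rightarrow \mathbb{C}$ is defined as

$$\hat{\mu}(t) = \int e^{i \langle t, x \rangle} \, d\mu(x).$$

We can also invert the above transformation. That is, if $\hat{\mu}$ is integrable with respect to Lebesgue measure on $\mathbb{R}^d$, then $\mu$ has a density

$$f(x) = \frac{1}{(2 \pi)^d} \int \hat{\mu}(t) e^{-i \langle t, x \rangle} \, dt.$$

Convolution: For two measures $\mu$ and $\nu$ on $\mathbb{R}^d$, the convolution measure $\mu * \nu$ is defined as

$$(\mu * \nu)(B) = \int \int \mathbf{1}_B(x + y) \, d\mu(x) \, d\nu(y)$$

for all $B \in \mathcal{B}(\mathbb{R}^d)$, where $\mathbf{1}_B(x + y) = 1$ if $x + y \in B$ and $0$ otherwise.

Note that the convolution operator is commutative and associative. Also, for two independent random variables $X$ and $Y$ with corresponding measures $\mu$ and $\nu$, the measure of $X + Y$ is $\mu * \nu$.

Uniqueness of Characteristic Function Theorem: Let $\mu$ and $\nu$ be probability measures on $\mathbb{R}^d$. If $\hat{\mu} = \hat{\nu}$ then $\mu = \nu$.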

ProofLet be a mean zero Gaussian measure on with variance . We denote and similarly for . It can be shown that the corresponding density functions for and are

Since , we have that for all .

Let be a random variable corresponding to and to . Then, the measure is paired with the random variable . Thus as , that is, pointwise for almost all . Thus, this convergence holds in probability and thus in distribution, i.e as .

Lastly, we have that and . Since the limit is unique .

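Central Limit Theorem: Let $(\Omega, \mathcal{F}, P)$ be a probability space and $X_1, X_2, \dots$ be i.i.d. random variables on $\mathbb{R}^d$ such that $E X_i = 0$ and $E \|X_i\|^2 < \infty$. Let $S_n = \sum_{i=1}^n X_i$. Then, $n^{-1/2} S_n \xrightarrow{d} Z$ where $Z$ is a Gaussian random variable with zero mean and covariance $\Sigma$ with $(j, k)$th entry $\Sigma_{j,k} = E[X_{1,j} X_{1,k}]$.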

ProofAs the random vectors are mean zero and independent for . In turn, for any ,

For any , there exists an such that . Thus, from Chebyshev’s inequality, we have that . This implies that the sequence is “uniformly tight.”

For a vector , the random variables are i.i.d. real-valued with and . Let be the characteristic function of .Then, and and Thus, by Taylor’s Theorem, we have

Thus, for any fixed vector ,

as . Thus, by the Uniqueness of Characteristic Function Theorem, we have that where is a Gaussian random variable with zero mean and covariance .
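In one dimension the convergence in distribution can be quantified exactly for a simple case. The sketch below assumes i.i.d. fair $\pm 1$ signs (mean 0, variance 1), an illustrative choice, and computes the sup-distance between the distribution function of $n^{-1/2} S_n$ and the standard normal distribution function $\Phi$. Because the former is a step function, the supremum is attained at a jump, on either its left or right side.

```python
from math import comb, erf, sqrt

def Phi(x):
    """Standard normal distribution function."""
    return 0.5 * (1 + erf(x / sqrt(2)))

def kolmogorov_distance(n):
    """sup_x |P(S_n / sqrt(n) <= x) - Phi(x)| for a sum of n fair +-1 signs."""
    dist, cdf = 0.0, 0.0
    for k in range(n + 1):            # k = number of +1 signs, S_n = 2k - n
        x = (2 * k - n) / sqrt(n)
        left = cdf                    # P(S_n / sqrt(n) < x)
        cdf += comb(n, k) / 2**n      # add the atom at x
        dist = max(dist, abs(left - Phi(x)), abs(cdf - Phi(x)))
    return dist

for n in (10, 100, 1000):
    print(n, kolmogorov_distance(n))
```

The distance shrinks roughly like $n^{-1/2}$, which matches the Berry-Esseen rate; the theorem itself only asserts that it tends to zero.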

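Ergodic Theorem

Measure Preserving Map: Let $(\Omega, \mathcal{F}, \mu)$ be a measure space. A measurable mapping $T: \Omega \to \Omega$ is called measure preserving if

$$\mu(T^{-1}(A)) = \mu(A) \text{ for all } A \in \mathcal{F}.$$

Invariant Set and Function: For a mapping $T: \Omega \to \Omega$,

A set $A \in \mathcal{F}$ is $T$-invariant if $T^{-1}(A) = A$. The set of all $T$-invariant sets forms a σ-field $\mathcal{F}_T$.

A measurable function $f$ is $T$-invariant if $f = f \circ T$. $f$ is $T$-invariant if and only if $f$ is $\mathcal{F}_T$-measurable, i.e. $f^{-1}(B) \in \mathcal{F}_T$ for all Borel sets $B$.

Ergodic Map: A mapping $T$ is said to be ergodic if for any $A \in \mathcal{F}_T$, $\mu(A) = 0$ or $\mu(A^c) = 0$.

Example

For Lebesgue measure on $[0, 1)$, two examples of measure preserving maps are the shift map

$$T(x) = x + a \mod 1$$

and Baker’s Map

$$T(x) = 2x \mod 1.$$

Furthermore, it can be shown that

- If $f$ is integrable and $T$ is measure preserving then $f \circ T$ is integrable and $\int f \, d\mu = \int f \circ T \, d\mu$

- If $T$ is ergodic and $f$ is invariant, then $f = c$ $\mu$-a.e. for some constant $c$.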
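Measure preservation can be verified directly on intervals, which generate the Borel σ-field. The sketch below assumes the shift map is $x \mapsto x + c \bmod 1$ and takes the doubling map $x \mapsto 2x \bmod 1$ as the one-dimensional version of Baker's map on $[0, 1)$; these formulas and the helper names are assumptions made for illustration. It computes preimages of $[a, b)$ as unions of intervals and compares total lengths.

```python
from fractions import Fraction

def preimage_doubling(a, b):
    """Preimage of [a, b) under T(x) = 2x mod 1: two half-length copies."""
    return [(a / 2, b / 2), ((a + 1) / 2, (b + 1) / 2)]

def preimage_shift(a, b, c):
    """Preimage of [a, b) under T(x) = x + c mod 1, as intervals in [0, 1)."""
    lo, hi = (a - c) % 1, (b - c) % 1
    if lo < hi:
        return [(lo, hi)]
    return [(lo, Fraction(1)), (Fraction(0), hi)]   # interval wraps around 1

def length(intervals):
    """Total Lebesgue measure of a list of disjoint intervals."""
    return sum(hi - lo for lo, hi in intervals)

a, b, c = Fraction(1, 4), Fraction(3, 4), Fraction(1, 3)
print(length(preimage_doubling(a, b)), length(preimage_shift(a, b, c)), b - a)
```

Note that the preimage, not the image, is what must have the same measure: the doubling map stretches every interval to twice its length, yet each preimage consists of two pieces of half the length.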

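Birkhoff and von Neumann’s Theorems

In what follows, we let $(\Omega, \mathcal{F}, \mu)$ be a measure space, $T: \Omega \to \Omega$ be a measure preserving transformation, $f$ a measurable function, and

$$S_n = S_n(f) = f + f \circ T + \dots + f \circ T^{n-1}$$

where $S_0 = 0$.

Maximal Ergodic Lemma: Let $f$ be integrable and $S^* = \sup_{n \ge 0} S_n$ (element-wise maximum). Then,

$$\int_{\{S^* > 0\}} f \, d\mu \ge 0.$$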

ProofLet and . Then, for ,

Furthermore, on the set ,

On the set , since and we have that Thus, integrating both sides of the above gives

Since is integrable and is measure preserving, . Thus, we have . As , we have that

due to the Dominated Convergence Theorem with as the dominating function.

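Birkhoff Ergodic Theorem: Let $(\Omega, \mathcal{F}, \mu)$ be a σ-finite measure space and $f$ be integrable. Then, there exists a $T$-invariant $\bar{f}$ such that

$$\int |\bar{f}| \, d\mu \le \int |f| \, d\mu$$

and $S_n(f) / n \to \bar{f}$ as $n \to \infty$ $\mu$-a.e.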

ProofBoth and are -invariant. Indeed, Thus, we can define a set for

which means that the and are separated, and this set is -invariant. The goal of the proof is to show that . Without loss of generality, we take . Otherwise, and we multiply everything by .

For some such that , then we set . Function is integrable and for each , there is an such that since . Thus, and the Maximal Ergodic Lemma says that

As is -finite, there exist such a sequence of sets such that and for all . Thus,

This implies that . Redoing the above argument for and results in . Therefore,

and since , we have that .

Next, let

Then, is -invariant as the and are. Furthermore, . Thus, .

This means that converges in on . Therefore, we define

Lastly, ( is measure preserving )and thus for all . Applying Fatou’s Lemma gives

finishing the proof.

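von Neumann’s Ergodic Theorem: Let $\mu(\Omega) < \infty$ and $p \in [1, \infty)$. Then, for all $f \in L^p$, there exists an $\bar{f} \in L^p$ such that $S_n(f) / n \to \bar{f}$ $\mu$-a.e. and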

Proof

We begin by noting that

By the above and the Minkowski’s Inequality, . Since , given a , we can choose a such that with

i.e. is bounded above and below by and . By the Birkhoff Ergodic Theorem, -a.e.

Next, we note that for all , and thus by the Dominated Convergence Theorem, there exists an such that for all ,

Applying Fatou’s Lemma gives that

Thus, for ,

Since , the convergence must hold in as well. Thus, we have

which gives the desired result.

Law of Large Numbers, Again

Let $(\Omega, \mathcal{F}, P)$ be a probability space with i.i.d. real-valued random variables with distribution function . We define a map by

Let be the corresponding probability measure on .

Because the variables are independent, has the form , and because they are identically distributed, all the marginal distributions are the same, so in fact for some probability distribution on .

For a sequence , we can define the shift map to be

Then the shift map is measure preserving and ergodic by Kolmogorov’s zero-one law.

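Strong Law of Large Numbers, Again: Let $X_1, X_2, \dots$ be i.i.d. random variables from $\Omega$ to $\mathbb{R}$. If $E|X_1| < \infty$, then $S_n / n \xrightarrow{a.s.} m$ for $m = E X_1$.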

Proof

Let by taking the first coordinate, that is, for , . Then, for being the shift map and , we have

Thus, von Neumann’s Ergodic Theorem says that there exists an invariant such that

for and

Since is ergodic, the result from the beginning of this section states that , a constant, almost surely. Thus,

gives the desired result.